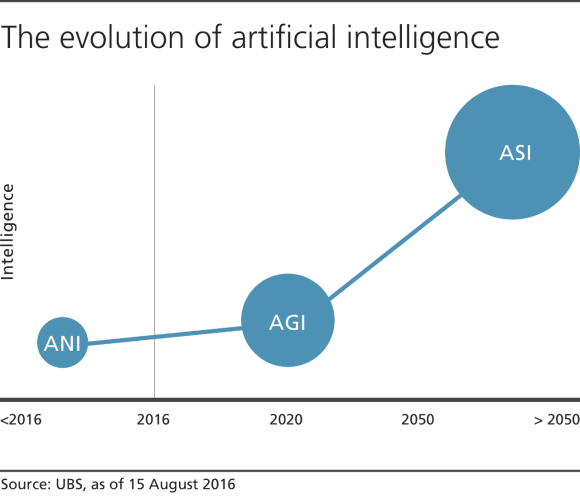

- There are three phases of AI:

- Artificial Narrow Intelligence (ANI)

- Artificial General Intelligence (AGI)

- Artificial Super Intelligence (ASI)

- The transition between the first and second phase has been the longest.

- We are in the final stages of ANI; the next phase, AGI, is the one in which the intelligence of machines and humans is equal.

More: The Evolution of Artificial Intelligence

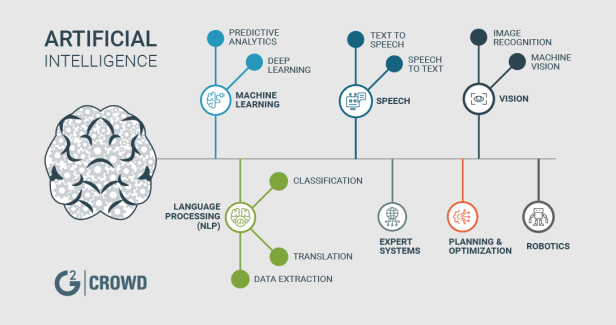

Artificial intelligence

In computer science, artificial intelligence (AI), sometimes called machine intelligence, is intelligence demonstrated by machines, in contrast to the natural intelligence displayed by humans and other animals. Computer science defines AI research as the study of “intelligent agents”: any device that perceives its environment and takes actions that maximize its chance of successfully achieving its goals.[1] In more detail, Kaplan and Haenlein define AI as “a system’s ability to correctly interpret external data, to learn from such data, and to use those learnings to achieve specific goals and tasks through flexible adaptation”.[2] Colloquially, the term “artificial intelligence” is applied when a machine mimics “cognitive” functions that humans associate with other human minds, such as “learning” and “problem solving”.[3]

In summary, artificial intelligence (AI) is an area of computer science that emphasizes the creation of intelligent machines that work and react like humans. Some of the activities that computers with artificial intelligence are designed for include:

- Speech recognition

- Learning

- Planning

- Problem solving

The AI Revolution: The Road to Superintelligence

The road to artificial superintelligence

Artificial superintelligence is coming, probably whether we like it or not, and probably within our lifetimes. If many of the experts are correct, this will either be our greatest dream or our worst nightmare. Here’s why.

“We are on the edge of change comparable to the rise of human life on Earth.”

—Vernor Vinge

If you’re like me, you used to think artificial intelligence was a silly sci-fi concept, but lately you’ve been hearing it mentioned by serious people, and you don’t quite get it.

There are three reasons a lot of people are confused about the term “AI”:

1) We associate AI with movies. Star Wars. Terminator. 2001: A Space Odyssey. Even The Jetsons. Those are fiction, as are the robot characters, so it makes AI sound a little fictional to us.

2) “AI” is a broad topic. It ranges from your phone’s calculator to self-driving cars to something in the future that might change the world dramatically. “AI” refers to all of these things, which is confusing.

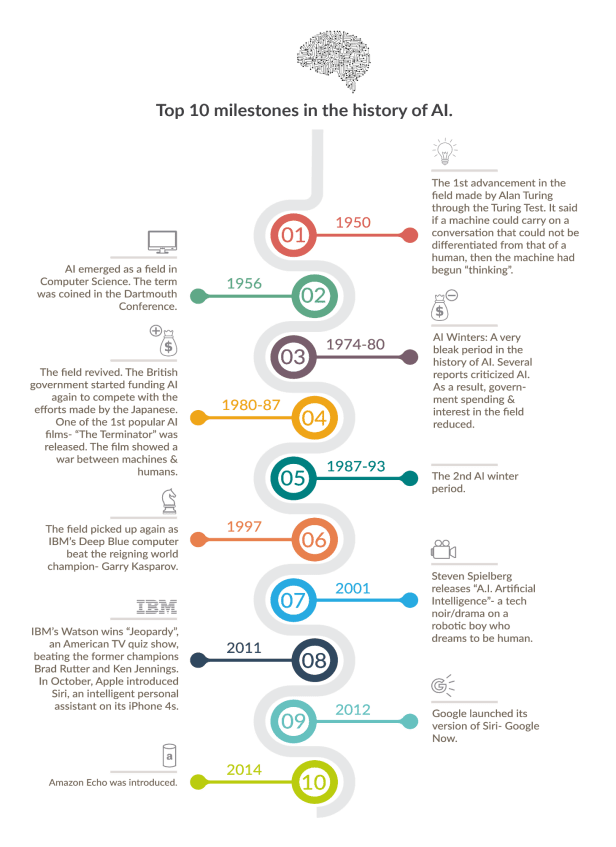

3) We use AI all the time in our daily lives, but we often don’t realize it’s AI. John McCarthy, who coined the term “artificial intelligence” in 1956, complained, “As soon as it works, no one calls it AI anymore.” Because of this phenomenon, AI often sounds more like a mythical future prediction than a reality. At the same time, it makes it sound like a pop concept from the past that never came to fruition. Ray Kurzweil says he hears people say that AI withered in the 1980s, which he compares to “insisting that the Internet died in the dot-com bust of the early 2000s.”

So let’s clear things up.

First, stop thinking of robots. A robot is a container for AI — sometimes mimicking the human form, sometimes not — but the AI itself is the computer inside the robot. AI is the brain, and the robot is its body — if it even has a body. For example, the software and data behind Siri is AI, the woman’s voice we hear is a personification of that AI, and there’s no robot involved at all.

Secondly, you’ve probably heard the term “singularity” or “technological singularity.” This term has been used in math to describe an asymptote-like situation where normal rules no longer apply. It’s been used in physics to describe a phenomenon like an infinitely small, dense black hole or the point we were all squished into right before the Big Bang. Again, situations where the usual rules don’t apply. In 1993, Vernor Vinge wrote a famous essay in which he applied the term to the moment in the future when our technology’s intelligence exceeds our own — a moment for him when life as we know it will be forever changed and normal rules will no longer apply. Ray Kurzweil then muddled things a bit by defining the singularity as the time when the law of accelerating returns has reached such an extreme pace that technological progress is happening at a seemingly infinite pace, and after which we’ll be living in a whole new world. I found that many of today’s AI thinkers have stopped using the term, and it’s confusing anyway, so I won’t use it much here (even though we’ll be focusing on that idea throughout).

Finally, while there are many different types or forms of AI, since “AI” is a broad concept, the critical categories we need to think about are based on an AI’s caliber. There are three major AI caliber categories:

1) Artificial narrow intelligence (ANI): Sometimes referred to as “weak AI,” artificial narrow intelligence is AI that specializes in one area. There’s AI that can beat the world chess champion in chess, but that’s the only thing it does. Ask it to figure out a better way to store data on a hard drive and it’ll look at you blankly.

2) Artificial general intelligence (AGI): Sometimes referred to as “strong AI,” or “human-level AI,” “artificial general intelligence” refers to a computer that is as smart as a human across the board — a machine that can perform any intellectual task that a human being can. Creating an AGI is a much harder task than creating an ANI, and we’ve yet to do it. Professor Linda Gottfredson describes intelligence as “a very general mental capability that, among other things, involves the ability to reason, plan, solve problems, think abstractly, comprehend complex ideas, learn quickly, and learn from experience.” An AGI would be able to do all those things as easily as you can.

3) Artificial superintelligence (ASI): Oxford philosopher and leading AI thinker Nick Bostrom defines “superintelligence” as “an intellect that is much smarter than the best human brains in practically every field, including scientific creativity, general wisdom and social skills.” Artificial superintelligence is a term referring to the time when the capability of computers will surpass humans. “Artificial intelligence,” which has been much used since the 1970s, refers to the ability of computers to mimic human thought. Artificial superintelligence goes a step beyond, and posits a world in which a computer’s cognitive ability is superior to a human’s.

Artificial superintelligence ranges from a computer that’s just a little smarter than a human to one that’s trillions of times smarter — across the board. ASI is the reason that the topic of AI is such a spicy meatball, and the reason that the words “immortality” and “extinction” will both appear in these posts multiple times.

As of now, humans have conquered the lowest caliber of AI — ANI — in many ways, and it’s everywhere. The AI revolution is the road from ANI through AGI to ASI — a road that we may or may not survive but that, either way, will change everything.

Let’s take a close look at what the leading thinkers in the field believe this road looks like and why this revolution might happen way sooner than you might think.

Where We Are Currently: A World Running on ANI

Artificial narrow intelligence is machine intelligence that equals or exceeds human intelligence or efficiency at a specific thing. A few examples:

Cars are full of ANI systems, from the computer that figures out when the anti-lock brakes should kick in to the computer that tunes the parameters of the fuel-injection systems. Google’s self-driving car, which is being tested now, will contain robust ANI systems that allow it to perceive and react to the world around it.

Your phone is a little ANI factory. When you navigate using your map app, receive tailored music recommendations from Pandora, check tomorrow’s weather, talk to Siri, or engage in dozens of other everyday activities, you’re using ANI.

Your email spam filter is a classic type of ANI. It starts off loaded with intelligence about how to figure out what’s spam and what’s not, and then it learns and tailors its intelligence to you as it gets experience with your particular preferences. The Nest thermostat does the same thing as it starts to figure out your typical routine and act accordingly.

You know the whole creepy thing that goes on when you search for a product on Amazon and then you see that as a “recommended for you” product on a different site, or when Facebook somehow knows whom it makes sense for you to add as a friend? That’s a network of ANI systems working together to inform each other about who you are and what you like and then using that information to decide what to show you. The same goes for Amazon’s “People who bought this also bought…” thing: That’s an ANI whose job it is to gather info from the behavior of millions of customers and synthesize that info to cleverly upsell you so you’ll buy more things.

Google Translate is another classic ANI, impressively good at one narrow task. Voice recognition is another, and there are a bunch of apps that use those two ANIs as a tag team, allowing you to speak a sentence in one language and have the phone spit out the same sentence in another.

When your plane lands, it’s not a human that decides which gate it should go to, just like it’s not a human that determined the price of your ticket.

The world’s best checkers, chess, Scrabble, backgammon, and Othello players are now all ANI systems.

Google Search is one large ANI brain with incredibly sophisticated methods for ranking pages and figuring out what to show you in particular. The same goes for Facebook’s Newsfeed.

And those are just in the consumer world. Sophisticated ANI systems are widely used in sectors and industries like military, manufacturing, and finance (algorithmic high-frequency AI traders account for more than half of equity shares traded on U.S. markets), and in expert systems like those that help doctors make diagnoses, and, most famously, in IBM’s Watson, who contained enough facts and understood coy Trebek-speak well enough to soundly beat the most prolific Jeopardy champions.

ANI systems as they are now aren’t especially scary. At worst, a glitchy or badly programmed ANI can cause an isolated catastrophe like knocking out a power grid, causing a harmful nuclear-power-plant malfunction, or triggering a financial-markets disaster (like the 2010 Flash Crash, when an ANI reacted the wrong way to an unexpected situation and caused the stock market to briefly plummet, taking $1 trillion of market value with it, only part of which was recovered when the mistake was corrected).

But while ANI doesn’t have the capability to cause an existential threat, we should see this increasingly large and complex ecosystem of relatively harmless ANI as a precursor of the world-altering hurricane that’s on the way. Each new ANI innovation quietly adds another brick onto the road to AGI and ASI. Or as Aaron Saenz sees it, our world’s ANI systems “are like the amino acids in the early Earth’s primordial ooze” — the inanimate stuff of life that, one unexpected day, woke up.

The Road From ANI to AGI

Why It’s So Hard

Nothing will make you appreciate human intelligence like learning about how unbelievably challenging it is to create a computer as smart as we are. Building skyscrapers, putting humans in space, figuring out the details of how the Big Bang went down — all are far easier than understanding our own brain or how to make something as cool as it. As of now, the human brain is the most complex object in the known universe.

What’s interesting is that the hard parts of trying to build an AGI (a computer as smart as humans in general, not just at one narrow specialty) are not intuitively what you’d think they are. Build a computer that can multiply two 10-digit numbers in a split second? Incredibly easy. Build one that can look at a dog and answer whether it’s a dog or a cat? Spectacularly difficult. Make AI that can beat any human in chess? Done. Make one that can read a paragraph from a 6-year-old’s picture book and not just recognize the words but understand the meaning of them? Google is currently spending billions of dollars trying to do it. Hard things like calculus, financial-market strategy, and language translation are mind-numbingly easy for a computer, while easy things like vision, motion, movement, and perception are insanely hard for it. Or, as computer scientist Donald Knuth puts it, “AI has by now succeeded in doing essentially everything that requires ‘thinking’ but has failed to do most of what people and animals do ‘without thinking.’”

What you quickly realize when you think about this is that those things that seem easy to us are actually unbelievably complicated, and they only seem easy because those skills have been optimized in us (and most animals) by hundreds of millions of years of animal evolution. When you reach your hand up toward an object, the muscles, tendons, and bones in your shoulder, elbow, and wrist instantly perform a long series of physics operations, in conjunction with your eyes, to allow you to move your hand in a straight line through three dimensions. It seems effortless to you because you have perfected software in your brain for doing it. The same idea goes for why it’s not that malware is dumb for not being able to figure out the slanty-word-recognition test when you sign up for a new account on a site; it’s that your brain is super-impressive for being able to.

On the other hand, multiplying big numbers or playing chess are new activities for biological creatures, and we haven’t had any time to evolve a proficiency at them, so a computer doesn’t need to work too hard to beat us. Think about it. Which would you rather do: Build a program that could multiply big numbers, or build one that could understand the essence of a “B” well enough that you could show it a “B” in any one of thousands of unpredictable fonts or handwriting and it could instantly know it was a “B”?

One fun example: When you look at the image below, you and a computer both can figure out that it’s a rectangle with two distinct shades, alternating.

The Road From AGI to ASI

At some point we’ll have achieved AGI — computers with human-level general intelligence. Just a bunch of people and computers living together in equality.

Oh actually not at all.

The thing is that an AGI with an identical level of intelligence and computational capacity as a human would still have significant advantages over humans. Like:

Hardware Speed: The brain’s neurons max out at around 200 hertz, while today’s microprocessors (which are much slower than they will be when we reach AGI) run at 2 gigahertz, or 10 million times faster than our neurons. And the brain’s internal communications, which can move at about 120 meters per second, are horribly outmatched by a computer’s ability to communicate optically at the speed of light.

Size and storage: The brain is locked into its size by the shape of our skulls, and it couldn’t get much bigger anyway, or the 120-meters-per-second internal communications would take too long to get from one brain structure to another. Computers can expand to any physical size, allowing far more hardware to be put to work, a much larger working memory (RAM), and a long-term memory (hard-drive storage) that has both far greater capacity and far greater precision than our own.

Reliability and durability: It’s not only the memories of a computer that would be more precise. Computer transistors are more accurate than biological neurons, and they’re less likely to deteriorate (and can be repaired or replaced if they do). Human brains also get fatigued easily, while computers can run nonstop, at peak performance, 24/7.

Software Editability, upgradability, and a wider breadth of possibility: Unlike the human brain, computer software can receive updates and fixes and can be easily experimented on. The upgrades could also span to areas where human brains are weak. Human vision software is superbly advanced, while its complex engineering capability is pretty low-grade. Computers could match the human on vision software but could also become equally optimized in engineering and any other area.

Collective capability: Humans crush all other species at building a vast collective intelligence. Beginning with the development of language and the forming of large, dense communities, advancing through the inventions of writing and printing, and now intensified through tools like the Internet, humanity’s collective intelligence is one of the major reasons we’ve been able to get so far ahead of all other species. And computers will be way better at it than we are. A worldwide network of AI running a particular program could regularly sync with itself so that anything any one computer learned would be instantly uploaded to all other computers. The group could also take on one goal as a unit, because there wouldn’t necessarily be dissenting opinions and motivations and self-interest like we have within the human population.
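As a quick sanity check on the hardware-speed figures quoted in the list above, here is a minimal Python sketch of the arithmetic. The neuron rate, clock rate, and 120 m/s signal speed come from the text; the speed of light in a vacuum is my own added constant, and it is an upper bound, since signals in real optical fiber travel somewhat slower.

```python
# Figures quoted in the text.
NEURON_MAX_HZ = 200               # peak firing rate of a neuron
CPU_CLOCK_HZ = 2_000_000_000      # a 2 GHz microprocessor
BRAIN_SIGNAL_M_PER_S = 120        # brain's internal communication speed

# Assumption added for this sketch: speed of light in a vacuum (upper bound).
LIGHT_SPEED_M_PER_S = 299_792_458


def ratio(fast: float, slow: float) -> float:
    """Return how many times larger `fast` is than `slow`."""
    return fast / slow


clock_ratio = ratio(CPU_CLOCK_HZ, NEURON_MAX_HZ)
signal_ratio = ratio(LIGHT_SPEED_M_PER_S, BRAIN_SIGNAL_M_PER_S)

print(f"Clock rate advantage:   {clock_ratio:,.0f}x")
print(f"Signal speed advantage: {signal_ratio:,.0f}x")
```

The first ratio works out to exactly 10 million, matching the figure in the text; the second comes to roughly 2.5 million under the vacuum-speed assumption.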

AI, which will likely get to AGI by being programmed to self-improve, wouldn’t see human-level intelligence as some important milestone — it’s only a relevant marker from our point of view — and wouldn’t have any reason to stop at our level. And given the advantages over us that even human-intelligence-equivalent AGI would have, it’s pretty obvious that it would only hit human intelligence for a brief instant before racing onwards to the realm of superior-to-human intelligence.

This may shock the shit out of us when it happens. The reason is that from our perspective, A) while the intelligence of different kinds of animals varies, the main characteristic we’re aware of about any animal’s intelligence is that it’s far lower than ours, and B) we view the smartest humans as way smarter than the dumbest humans. Kind of like this:

So as AI zooms upward in intelligence toward us, we’ll see it as simply becoming smarter, for an animal. Then, when it hits the lowest capacity of humanity — Nick Bostrom uses the term “the village idiot” — we’ll be like, “Oh, wow, it’s like a dumb human. Cute!” The only thing is that in the grand spectrum of intelligence, all humans, from the village idiot to Einstein, are within a very small range, so just after hitting village-idiot level and being declared an AGI, it’ll suddenly be smarter than Einstein and we won’t know what hit us:

And what happens after that? An Intelligence Explosion

I hope you enjoyed normal time, because this is when this topic gets abnormal and scary, and it’s going to stay that way from here forward. I want to pause here to remind you that every single thing I’m going to say is real — real science and real forecasts of the future from a large array of the most respected thinkers and scientists. Just keep remembering that.

Anyway, as I said above, most of our current models for getting to AGI involve the AI getting there by self-improvement. And once it gets to AGI, even systems that formed and grew through methods that didn’t involve self-improvement would now be smart enough to begin self-improving if they wanted to.²

And here’s where we get to an intense concept: recursive self-improvement. It works like this: An AI system at a certain level — say, village idiot — is programmed with the goal of improving its own intelligence. Once it does, it’s smarter — maybe at this point it’s at Einstein’s level — so now, with an Einstein-level intellect, when it works to improve its intelligence, it has an easier time and it can make bigger leaps. These leaps make it much smarter than any human, allowing it to make even bigger leaps. As the leaps grow larger and happen more rapidly, the AGI soars upwards in intelligence and soon reaches the superintelligent level of an ASI system. This is called an intelligence explosion, and it’s the ultimate example of the law of accelerating returns.
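To make the feedback loop concrete, here is a toy Python sketch. It is not a model from the article or from any AI researcher: it simply assumes that each round's leap is proportional to the system's current level (the hypothetical `gain` parameter), which is the simplest rule under which a smarter system makes bigger leaps. The "intelligence" units are arbitrary.

```python
def self_improvement_trajectory(start_level: float, gain: float, rounds: int) -> list[float]:
    """Return the intelligence level after each round of self-improvement.

    Assumed rule (illustrative only): the size of each leap is
    proportional to the current level, so smarter systems leap further.
    """
    levels = [start_level]
    for _ in range(rounds):
        current = levels[-1]
        leap = gain * current          # bigger intellect -> bigger leap
        levels.append(current + leap)
    return levels


levels = self_improvement_trajectory(start_level=1.0, gain=0.5, rounds=10)
leaps = [after - before for before, after in zip(levels, levels[1:])]

for round_number, (level, leap) in enumerate(zip(levels[1:], leaps), start=1):
    print(f"round {round_number:2d}: level {level:8.2f} (leap of {leap:6.2f})")
```

Under this assumption the growth is exponential: every leap is larger than the one before it, which is the "accelerating returns" pattern the paragraph describes. Whether real systems would behave this way is exactly what the experts debate.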

There is some debate about how soon AI will reach human-level general intelligence; the median year on a survey of hundreds of scientists about when they believed we’d be more likely than not to have reached AGI was 2040. That’s only 25 years from now, which doesn’t sound that huge until you consider that many of the thinkers in this field think it’s likely that the progression from AGI to ASI happens very quickly. Like, this could happen:

It takes decades for the first AI system to reach low-level general intelligence, but it finally happens. A computer is able to understand the world around it as well as a human 4-year-old. Suddenly, within an hour of hitting that milestone, the system pumps out the grand theory of physics that unifies general relativity and quantum mechanics, something no human has been able to definitively do. Ninety minutes after that, the AI has become an ASI, 170,000 times more intelligent than a human.

Superintelligence of that magnitude is not something we can remotely grasp, any more than a bumblebee can wrap its head around Keynesian economics. In our world, “smart” means a 130 IQ, and “stupid” means an 85 IQ. We don’t have a word for an IQ of 12,952.

What we do know is that humans’ utter dominance on this Earth suggests a clear rule: With intelligence comes power. This means an ASI, when we create it, will be the most powerful being in the history of life on Earth, and all living things, including humans, will be entirely at its whim — and this might happen in the next few decades.

If our meager brains were able to invent wi-fi, then something 100 or 1,000 or 1 billion times smarter than we are should have no problem controlling the positioning of each and every atom in the world in any way it likes, at any time. Everything we consider magic, every power we imagine a supreme God to have, will be as mundane an activity for the ASI as flipping on a light switch is for us. Creating the technology to reverse human aging, curing disease and hunger and even mortality, reprogramming the weather to protect the future of life on Earth — all suddenly possible. Also possible is the immediate end of all life on Earth. As far as we’re concerned, if an ASI comes into being, there is now an omnipotent god on Earth — and the all-important question for us is:

Will it be a nice god?

That’s the topic of Part 2.

Footnotes:

1) Kurzweil points out that his phone is about a millionth the size of, a millionth the price of, and a thousand times more powerful than his MIT computer was 40 years ago. Good luck trying to figure out where a comparable future advancement in computing would leave us, let alone one far, far more extreme, since the progress grows exponentially.

2) There’s much more on what it means for a computer to “want” to do something in Part 2.

More: https://waitbutwhy.com/2015/01/artificial-intelligence-revolution-1.html

Artificial Virtual Assistants (AVA)

Artificial Virtual Intelligence

An artificial virtual assistant is an artificially intelligent software agent that can perform tasks or services for an individual. Sometimes the term “chatbot” is used to refer to artificial virtual assistants generally, or specifically to those accessed by online chat (or, in some cases, to online chat programs that exist for entertainment rather than for useful purposes).

As of 2018, the capabilities and usage of artificial virtual assistants are expanding rapidly, with new products entering the market. An online poll in May 2017 found the most widely used in the US were Apple’s Siri (34%), Google Assistant (19%), Amazon Alexa (6%), and Microsoft Cortana (4%).[1] Apple and Google have large installed bases of users on smartphones. Microsoft has a large installed base of Windows-based personal computers, smartphones and smart speakers. Alexa has a large installed base for smart speakers.[2]

History

The first tool able to perform digital speech recognition was the IBM Shoebox, presented to the general public during the 1962 Seattle World’s Fair after its initial market launch in 1961. This early computer, developed almost 20 years before the introduction of the first IBM Personal Computer in 1981, was able to recognize 16 spoken words and the digits 0 to 9. The next milestone in the development of voice recognition technology was achieved in the 1970s at Carnegie Mellon University in Pittsburgh, Pennsylvania, with substantial support from the United States Department of Defense and its DARPA agency. Their tool “Harpy” mastered about 1000 words, the vocabulary of a three-year-old. About ten years later the same group of scientists developed a system that could analyze not only individual words but entire word sequences, enabled by a Hidden Markov Model.[3] Thus, the earliest artificial virtual assistants that applied speech recognition software were automated attendant and medical digital dictation software.[4] In the 1990s digital speech recognition technology became a feature of the personal computer, with Microsoft, IBM, Philips and Lernout & Hauspie fighting for customers. The market launch of the first smartphone, the IBM Simon, in 1994 laid the foundation for smart artificial virtual assistants as we know them today.[5] The first modern digital artificial virtual assistant installed on a smartphone was Siri, which was introduced as a feature of the iPhone 4S on October 4, 2011.[6] Apple Inc. developed Siri following the 2010 acquisition of Siri Inc., a spin-off of SRI International, which is a research institute financed by DARPA and the United States Department of Defense.[3]

Method of interaction

Artificial virtual assistants may work via:

- Text (online chat), especially in an instant messaging app or other app

- Voice, for example with Amazon Alexa on the Amazon Echo device, or Siri on an iPhone

- By taking and/or uploading images, as in the case of Samsung Bixby on the Samsung Galaxy S8

Some artificial virtual assistants are accessible via multiple methods, such as Google Assistant via chat on the Google Allo app and via voice on Google Home smart speakers.

Artificial virtual assistants use natural language processing (NLP) to match user text or voice input to executable commands. Many continually learn using artificial intelligence techniques including machine learning.

To activate an artificial virtual assistant by voice, a wake word might be used. This is a word or group of words such as “Alexa” or “OK Google”.[7]
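The wake-word and command-matching flow described above can be sketched in a few lines of Python. This is a deliberately simplified illustration, not how Alexa or Google Assistant actually work: it assumes the speech has already been transcribed to text, and it stands in plain regular expressions for real NLP. The command table, the handler outputs, and the function names are all hypothetical.

```python
import re
from typing import Optional

# Wake words taken from the examples in the text.
WAKE_WORDS = ("alexa", "ok google")

# Hypothetical command table: each pattern maps to a handler that
# returns a string describing the action the assistant would take.
COMMANDS = [
    (re.compile(r"\bweather\b"), lambda m: "weather"),
    (re.compile(r"\bset (?:an )?alarm for (?P<time>[\w: ]+)"),
     lambda m: f"alarm:{m.group('time').strip()}"),
]


def strip_wake_word(utterance: str) -> Optional[str]:
    """Return the request that follows a wake word, or None if there is none."""
    text = utterance.lower().strip()
    for wake in WAKE_WORDS:
        if text.startswith(wake):
            return text[len(wake):].lstrip(" ,")
    return None


def handle(utterance: str) -> Optional[str]:
    """Match a transcribed utterance to a command; stay idle without a wake word."""
    request = strip_wake_word(utterance)
    if request is None:
        return None                     # no wake word: the assistant ignores it
    for pattern, handler in COMMANDS:
        match = pattern.search(request)
        if match:
            return handler(match)
    return "unknown"                    # woke up, but no command matched


print(handle("Alexa, what's the weather today?"))
print(handle("OK Google set an alarm for 7:30"))
print(handle("What's the weather today?"))
```

The first two calls are routed to the weather and alarm handlers, while the third returns `None` because no wake word was spoken. A production assistant would replace the regular expressions with a trained language-understanding model and would detect the wake word in the audio itself.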

Artificial virtual assistant software alternatives to Windows 10’s Cortana

AVA Alternatives

By: Matthew Adams ▪ March 29, 2017

Artificial Virtual Assistant programs are AI software that can assist you with various things. You can request that artificial virtual assistants send texts or emails, provide weather details, search the web, remember phone numbers, provide dictionary definitions, open software and much more. These are also programs that you can use with microphones or chat with by entering text. There are more AI applications for mobiles than desktops, but some publishers have launched a few artificial virtual assistant programs for Windows.

Apple’s Siri was among the first digital assistants for mobiles and tablets, and Microsoft included its first artificial virtual assistant in Windows 10 and Windows Phone 8.1. Cortana is Windows 10’s artificial virtual assistant, and it can keep a notebook for you, send emails, tell you the time in various countries, replace the Calculator app, provide football scores and fixtures, set alarms, play songs with apps, schedule dates, etc. Although it might seem little more than a search tool at first, Cortana is a lot more. However, you can still try out any of these alternative third-party artificial virtual assistants for various Windows platforms.

Braina

Braina is artificial virtual assistant software that has a freeware Lite version and a proprietary Pro version, which is retailing at $29. With the Lite version you can save notes, set alarms and reminders, open programs and files, open websites, play songs, get weather details and search the web. The software is also a handy dictionary and thesaurus. However, the Pro version can do quite a bit more and enables users to dictate speech to text for word processing, make Skype calls, set up hotkeys to trigger custom commands, create keyboard macros and establish startup commands that automatically execute when you first open Braina.

Jarvis Lite

Jarvis Lite is freeware you can add to Windows by pressing the Download Now button on its home page. This application has minimal system requirements, so it’s a good choice for more outdated laptops or desktops. With Jarvis Lite’s shell commands users can launch software, shut down Windows, play songs, open and send emails and open web pages. The software’s termination commands come in handy for closing programs and windows. In addition, Jarvis enables speech to text dictation and provides handy audio controls; and you can also set up custom voice commands with it.

Ultra Hal Assistant 6.2

Ultra Hal Assistant 6.2 is retailing at $29.95 and runs on most Windows platforms. This VA software includes 3D avatars that users can chat with, and it also has a UI that you can customize with skins. The best thing about this software is that it boasts an expansive chat database for more natural discussions. It also includes its own text editor for text dictation. Aside from that, this digital assistant can search for and open programs, tell you the weather, replace the Calculator app, dial phone numbers, start emails and provide reminders when required.

Syn artificial virtual assistant

This is a freeware artificial virtual assistant you can add to Windows 7/8/8.1/10 from this website. Syn artificial virtual assistant is another that includes 3D avatars that bring it to life. Iron Man fans will love the software’s 3D Iron Man avatar. The software’s SynEngine integrates with Skype, Google maps, Yahoo, Twitter and other web services. Syn opens programs and websites, manages contacts, checks email and plays media. The software also supports Visual Basic, C++ and C# plug-ins so that developers can further enhance it.

At the moment there aren’t many digital assistants for Windows. However, artificial virtual assistants could be the next big thing in the software industry. Hal, Syn, Denise, Jarvis and Braina are five alternatives to Cortana you can install now; and there’ll probably be lots more new artificial virtual assistant software for Windows.

Denise Artificial Virtual Assistant

Denise is VA software available for $120, and there’s no stripped down freeware version. However, the software might still be well worth it as it comes with a unique set of avatars and a GUI that Cortana can’t match. Denise has male, female and robot avatars within a real-time display system with millions of colors. Users can resize the avatars to alternative resolutions and customize the UI’s menu options.

Aside from its amazing avatars and GUI, this artificial virtual assistant has weather forecast, email, agenda, search and dictionary modules. You can use Denise as a media player to play music and video or as an app launcher to open programs with. The software supports both Spanish and English dictation to dictate text in external software. Denise can rip music from CDs and convert it to MP3 format, and it can also send photos and videos to Picasa and YouTube accounts. Kiosk Studio is one of the software’s more novel modules with which you can set up PowerPoint style presentations hosted by Denise. With a little programming, users can even integrate Denise with third-party software.

For my Superintelligence2525/Supi2525 Ecosystem, I use Denise. You will find more about Denise at the end of the article.

These four artificial virtual assistants point the way to the future

By Mike Elgan

Contributing Columnist, Computerworld | Jun 8, 2016 3:30 AM PT

We all need a little help sometimes. After all, what are cloud-based, artificially intelligent software agents for?

By now we’re all familiar with the usual suspects: Siri, Google Now, Cortana and Alexa. They introduced most of us to the idea of talking to, rather than via, a phone, computer or home appliance.

But let’s face it: These artificial virtual assistants have become boring, banal commodities. Sorry, Siri: Your jokes are stale and your evolution slow.

More than anything else, Siri, Google Now, Cortana and Alexa have left us wanting agents that can understand and interact with us better, independently take action in the real world for us — and even change our lives.

Get ready to upgrade: A new crop of artificial virtual assistants is on the way, led by Amy, Shae, Otto and Denise. Each in its own way represents the future of artificial virtual assistants. One is in public beta, one is in private beta and one is a hardware prototype, but they’re coming soon, and they collectively reveal how much better artificial virtual assistants can be.

Amy

Amy does one thing really well: scheduling your meetings.

Amy is the creation of a New York startup called x.ai. Right now there’s a waiting list. But once you reach the top of the list and start using it, here’s how Amy works.

(You can choose to call your assistant Amy or Andrew, but most people call it Amy and that’s what I’ll call it for this piece.)

For now, Amy lives on the other side of an email address: amy@x.ai. (CEO Dennis Mortensen told me that the company intends to put Amy on other platforms, such as Slack and other group-chat apps, Amazon Echo and more, and that the platform shouldn’t matter.)

You simply cc: Amy’s email address on your communication about the scheduling of any meeting, and Amy takes over. Amy is “invisible software” — there’s no app to install, no website to interact with.

Amy is adept with natural language processing, which means you can use everyday language. For example, you might send an email to a colleague, copying Amy, and say: “Hey, let’s get together next week” or “Grab a bite next week?” or “We should connect.” Amy will then take action, introduce herself to the other person and, based on your calendar and preferences, suggest a time to meet.
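The article does not say how Amy picks a time. As a rough illustration only, the sketch below shows one simple way an assistant could suggest a slot from calendar data: merge everyone's busy periods and return the earliest gap that is long enough. The function name `first_free_slot` and its parameters are hypothetical and are not taken from x.ai.

```python
from datetime import datetime, timedelta


def first_free_slot(busy, window_start, window_end, duration):
    """Return the start of the earliest free gap of at least `duration`.

    busy: list of (start, end) datetime pairs from all attendees' calendars.
    Returns None if no gap fits inside [window_start, window_end].
    Hypothetical helper for illustration, not x.ai's actual method.
    """
    cursor = window_start  # earliest moment not yet known to be busy
    for start, end in sorted(busy):
        if start - cursor >= duration:
            break  # the gap before this meeting is long enough
        cursor = max(cursor, end)  # skip past this (possibly overlapping) meeting
    return cursor if window_end - cursor >= duration else None


day = datetime(2016, 6, 13)
busy = [
    (day.replace(hour=9), day.replace(hour=10, minute=30)),
    (day.replace(hour=10), day.replace(hour=12)),
    (day.replace(hour=13), day.replace(hour=14)),
]
slot = first_free_slot(
    busy, day.replace(hour=9), day.replace(hour=17), timedelta(hours=1)
)
print(slot)  # 2016-06-13 12:00:00
```

A real assistant would also have to weigh stated preferences, time zones and the other person's replies, which this sketch leaves out.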

Amy is interactive. If the person you want to meet with gets back to Amy with restrictions or additional suggestions, Amy handles all the back-and-forth that often attends the hunt for a mutually agreeable meeting time. If you want to know how it’s all going, you can send an email to Amy and ask how scheduling the meeting with so-and-so is going, and Amy will reply with the current status.

If anyone wants to reschedule later, Amy handles that in the same way.

Best of all, Amy does the heavy lifting when you need to reschedule. Let’s say you decide to take a last-minute vacation. Just send an email to Amy and say: “Clear my schedule for next week.” If you’ve got 10 meetings scheduled, Amy will reach out to all 10 people to reschedule and will update your calendar.

Amy works great, according to my own tests. The assistant reliably and consistently seems to “understand” communication about meetings, and takes appropriate action.

Amy represents the future of artificial virtual assistants for two reasons. First, it’s a specialist agent, doing one thing very well.

Second, Amy is believably human. Within the confines of email conversations on the subject of scheduling meetings, Amy passes the Turing test.

In the future, human customer service operators will be aided by artificial intelligence and A.I. will be helped by humans. The public will neither know nor care whether various assistants and agents are real or artificial intelligence.

Shae

Good artificial virtual assistants look out for you. That’s why Shae is a great example of the future of artificial virtual assistants. Shae helps you get healthy by guiding and informing you about healthy living all day, every day.

The company behind Shae, Personal Health 360 (PH360), throws around some big numbers. It claims Shae uses some 500 algorithms fed by more than 10,000 data points to provide very specific help for users.

That’s a lot of data, and it comes from unexpected places. For example, Shae takes into account family history, individual body type and environmental factors like the weather and pollen count. Much of the health data Shae uses comes from a personalized phenotype questionnaire that each user fills out.

Shae additionally accesses both your calendar and biometrics as detected from a monitoring device like the Apple Watch to figure out what your mood might be. When it detects signs of stress such as an elevated heart rate, the app pops up a dialog to ask you if you’re feeling stressed.

Like Google Now, Shae takes the initiative to give you information, updates and advice, telling you what to eat and when to exercise, and keeping tabs on changing health data, such as your weight, body mass index (BMI, an estimate of body fat based on height and weight), lean muscle mass and other body measurements. Shae even helps you plan vacations based on your personal profile and circadian rhythm.
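For readers unfamiliar with BMI, the standard formula is weight in kilograms divided by the square of height in meters. The short sketch below computes it. The function name `bmi` is only illustrative, and nothing here reflects how Shae itself processes health data.

```python
def bmi(weight_kg: float, height_m: float) -> float:
    """Body mass index: weight (kg) divided by height (m) squared."""
    if weight_kg <= 0 or height_m <= 0:
        raise ValueError("weight and height must be positive")
    return weight_kg / height_m ** 2


# Example: a person weighing 70 kg who is 1.75 m tall.
print(round(bmi(70, 1.75), 1))  # 22.9
```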

PH360 exceeded its initial goal of raising $100,000 in Kickstarter crowdfunding. Shae is now in private beta, with global release planned for this fall. An annual subscription will cost $197.

Over time, we’ll learn if users are thrilled with the Shae assistant. Whether Shae succeeds or fails, the service represents the future of artificial virtual assistants because of its extreme personalization and the eclectic nature of the data, integrating family history, personal health details, health knowledge, environmental data and more — and for its preemptive advocacy of habits that benefit the user.

Samsung Otto

Following the success of Amazon’s Echo device, Samsung unveiled its own artificial virtual assistant home appliance called Otto in April of this year. (Google has now followed suit with the May announcement of its upcoming Google Home appliance.)

Like the Amazon Echo, which is possessed by an artificial virtual assistant named Alexa, the Otto is an Internet-connected speaker and microphone that interacts with you via spoken conversations and can control home appliances like lights. Otto can answer questions, order products, and play music and podcasts on command.

But unlike the Echo, Otto is also an HD security camera that can stream video live to your phone or computer. The device has a kind of head that can turn, pivot and swivel to let you look around the room remotely. Otto can also recognize faces — and even has a rudimentary face of its own, displayed on a screen.

It’s based on Samsung’s ARTIK IoT platform, for which Samsung recently unveiled developer tools.

Otto is a prototype, and Samsung isn’t saying whether it will bring the appliance to market, or when. But based on my own observation of Samsung’s way of quickly bringing high-visibility prototypes to market as products, I expect Otto or a version of it to become available within the next year or so.

Otto represents the future of artificial virtual assistants not only because it is a physical home appliance, but also because it uses facial recognition. That means different members of the family could each have their own set of preferences and personal details, calendars and accounts — and Otto and other future appliances could base their interactions on the knowledge of the person they’re talking to.

The future of artificial virtual assistants is virtually here

Shae, Amy, Otto and Denise together represent the future of artificial virtual assistants, which will be specialized, personalized, thorough, preemptive, highly intelligent and optionally available in the form of dedicated physical appliances.

These four artificial virtual assistants already suggest just how helpful and, well, human our technology will become.

You will find more about Denise for Superintelligence2525/Supi2525 here:

35. Denise, an Artificial Virtual Assistant (AVA) for Superintelligence2525/Supi2525